It is often said that large language models (LLMs) along the lines of OpenAI’s ChatGPT are a black box, and there is certainly some truth to that. Even for data scientists, it’s hard to know why a model behaves the way it does in every case, such as when it invents facts out of whole cloth.

In an effort to peel back the layers of LLMs, OpenAI is developing a tool to automatically identify which parts of an LLM are responsible for which of its behaviors. The engineers behind it stress that it’s in its early stages, but the code to run it is available as open source on GitHub as of this morning.

“We’re trying to [develop ways to] anticipate what the problems with an AI system will be,” William Saunders, the interpretability team manager at OpenAI, told ukbusinessupdates.com in a phone interview. “We really want to know that we can trust what the model is doing and the response it produces.”

To that end, OpenAI’s tool uses a language model (ironically) to figure out the functions of the components of other, architecturally simpler LLMs – most notably OpenAI’s own GPT-2.

OpenAI’s tool attempts to simulate the behavior of neurons in an LLM.

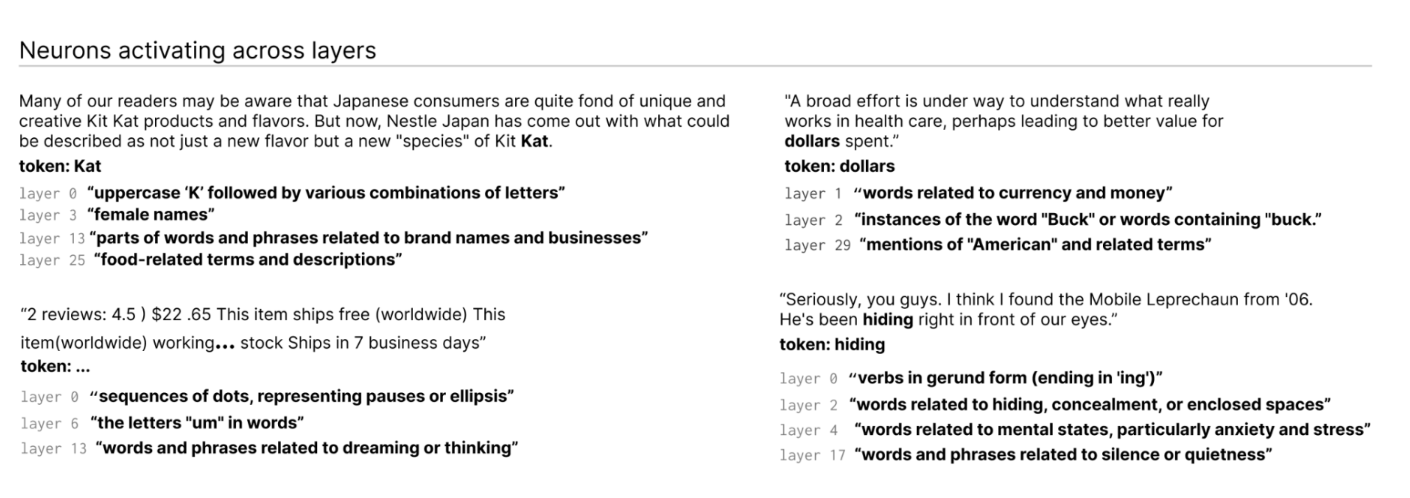

How? First, a brief explanation of LLMs for background. Like the brain, they are made up of “neurons,” each of which watches for a specific pattern in text and influences what the overall model “says” next. For example, given a prompt about superheroes (e.g. “Which superheroes have the most useful superpowers?”), a “Marvel superhero neuron” could increase the model’s likelihood of naming specific superheroes from Marvel movies.
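To make the idea concrete, here is a toy sketch of how one neuron's activation might shift a model's next-word probabilities. The scores, weights, and function names are all invented for illustration and do not come from GPT-2 or from OpenAI's tool.

```python
import math

def softmax(logits):
    """Convert raw scores into probabilities that sum to 1."""
    peak = max(logits.values())
    exps = {tok: math.exp(score - peak) for tok, score in logits.items()}
    total = sum(exps.values())
    return {tok: value / total for tok, value in exps.items()}

# Hypothetical scores for the next token before the neuron contributes.
base_logits = {"Iron Man": 1.0, "Batman": 1.0, "Superman": 1.0}

# Hypothetical output weights: this "neuron" pushes toward Marvel names only.
neuron_weights = {"Iron Man": 2.0, "Batman": 0.0, "Superman": 0.0}

def next_token_probs(activation):
    """Add the neuron's contribution, scaled by how strongly it fired."""
    logits = {tok: base_logits[tok] + activation * neuron_weights[tok]
              for tok in base_logits}
    return softmax(logits)

quiet = next_token_probs(activation=0.0)   # neuron silent
firing = next_token_probs(activation=1.0)  # neuron fully active
print(quiet["Iron Man"], firing["Iron Man"])
```

When the neuron is silent, the three names are equally likely. When it fires, the Marvel name takes most of the probability.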

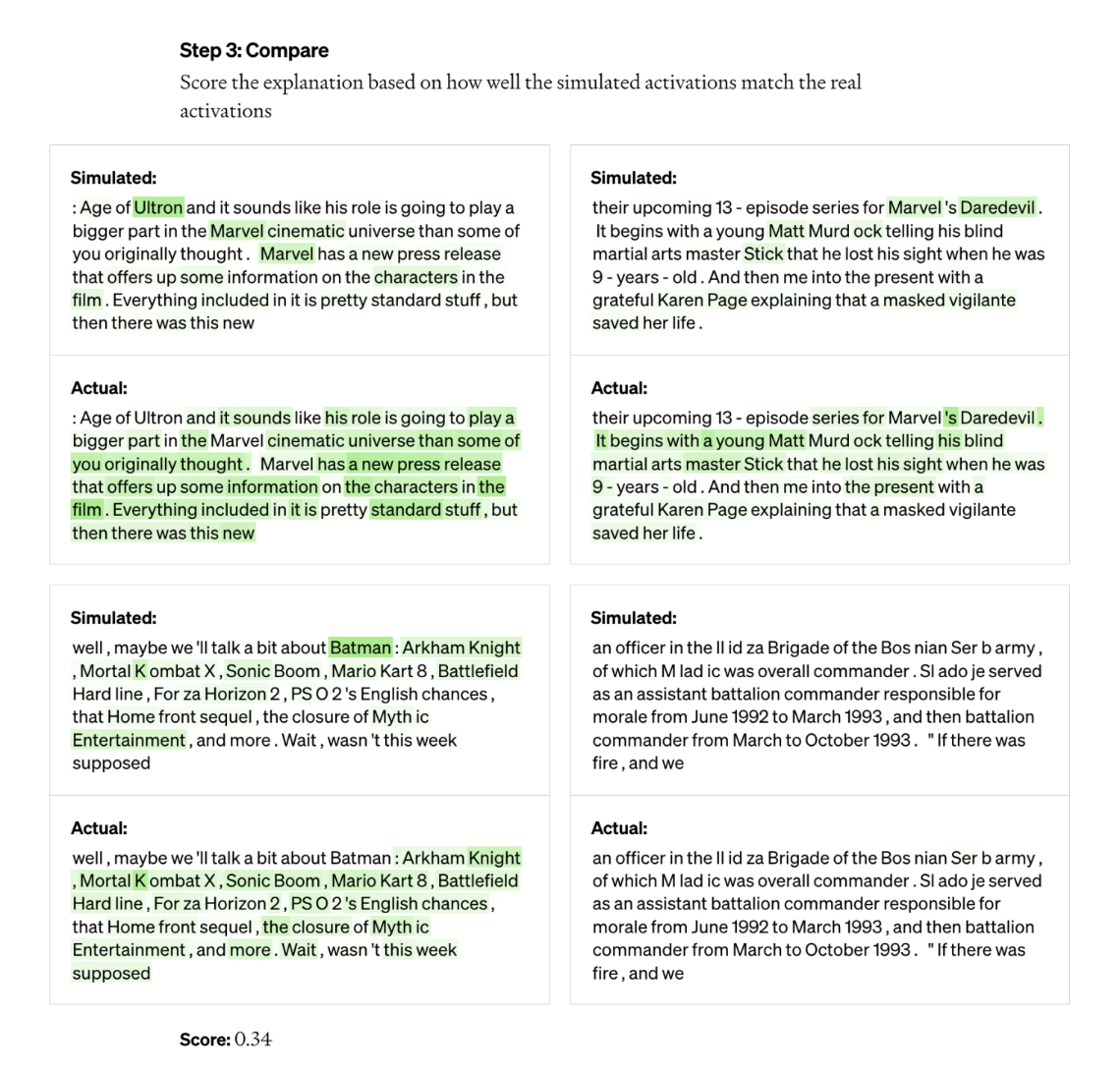

OpenAI’s tool uses this arrangement to break models down into their separate pieces. First, the tool runs text sequences through the model being evaluated and looks for cases where a particular neuron “activates” frequently. Next, it “shows” GPT-4, OpenAI’s latest text-generating AI model, these highly active neurons and has GPT-4 generate an explanation. To determine how accurate the explanation is, the tool provides GPT-4 with text sequences and has it predict, or simulate, how the neuron would behave. It then compares the behavior of the simulated neuron with the behavior of the actual neuron.
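The explain, simulate, and compare loop can be sketched in a few lines. This is a minimal illustration, not OpenAI's code: the tokens and activations are made up, a hard-coded function stands in for GPT-4, and the use of Pearson correlation as the comparison is an assumption, since the article does not name the metric.

```python
from statistics import mean

def pearson(xs, ys):
    """Pearson correlation between two equal-length lists of numbers."""
    mx, my = mean(xs), mean(ys)
    cov = sum((x - mx) * (y - my) for x, y in zip(xs, ys))
    var_x = sum((x - mx) ** 2 for x in xs)
    var_y = sum((y - my) ** 2 for y in ys)
    if var_x == 0 or var_y == 0:
        return 0.0  # a flat signal carries no information to correlate
    return cov / (var_x * var_y) ** 0.5

# Step 1 (made-up data): tokens and one neuron's recorded activations.
tokens = ["Thor", "ate", "lunch", "with", "Hulk"]
real_activations = [0.9, 0.0, 0.1, 0.0, 0.8]

# Step 2 stand-in: the explanation an explainer model might write.
explanation = "fires on Marvel character names"

def simulate(explanation, tokens):
    """Stand-in for the simulator model.

    A real simulator would read the explanation; this toy ignores it and
    uses a hard-coded name list in place of the model's judgment.
    """
    marvel_names = {"Thor", "Hulk"}
    return [1.0 if tok in marvel_names else 0.0 for tok in tokens]

# Step 3: compare simulated behavior with the recorded behavior.
simulated = simulate(explanation, tokens)
score = pearson(real_activations, simulated)
print(f"{explanation!r} scores {score:.2f}")
```

A score near 1 means the explanation predicts the neuron well. A score near 0 means it explains little of what the neuron actually does.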

“Using this methodology, we can basically come up with some sort of preliminary natural language explanation for each individual neuron for what it’s doing and also have a score for how well that explanation matches the actual behavior,” Jeff Wu, who leads the scalable alignment team at OpenAI, said. “We use GPT-4 as part of the process to provide explanations of what a neuron is looking for and then assess how well those explanations match the reality of what it is doing.”

The researchers were able to generate explanations for all 307,200 neurons in GPT-2, which they collected in a dataset released along with the tool code.

Such tools could one day be used to improve the performance of an LLM, the researchers say, for example to reduce bias or toxicity. But they acknowledge that the tool has a long way to go before it’s genuinely useful. It was confident in its explanations for only about 1,000 of those neurons, a small fraction of the total.
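For a sense of scale, the two figures reported here work out to roughly a third of one percent. A quick check follows; the variable names are illustrative, and the result is approximate because the 1,000 figure is itself rounded.

```python
total_neurons = 307_200     # GPT-2 neuron count reported in the article
confident_neurons = 1_000   # "about 1,000", so the result is approximate

share = confident_neurons / total_neurons
print(f"{share:.2%} of neurons had a confident explanation")
```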

A cynical person might also argue that the tool is essentially an advertisement for GPT-4, since it requires GPT-4 to work. Other LLM interpretability tools, such as DeepMind’s Tracr, a compiler that translates programs into neural network models, depend less on commercial APIs.

Wu said that this is not the case: the fact that the tool uses GPT-4 is merely “incidental” and, if anything, shows GPT-4’s weaknesses in this area. He also said it was not created with commercial applications in mind and could, in theory, be adapted to use LLMs other than GPT-4.

The tool identifies neurons that are activated across layers in the LLM.

“Most of the explanations score quite poorly or don’t explain as much of the behavior of the actual neuron,” Wu said. “For example, many of the neurons are active in a way that it’s really hard to tell what’s going on — like they’re firing on five or six different things, but there’s no discernible pattern. Sometimes there is an observable pattern, but GPT-4 cannot find it.”

That is to say nothing of more complex, newer and larger models, or models that can browse the web for information. But on that second point, Wu believes web browsing wouldn’t change much of the tool’s underlying mechanisms. It could be readily adapted, he says, to figure out why neurons decide to make certain search engine queries or visit certain websites.

“We hope this opens a promising avenue for addressing interpretability in an automated way that others can build on and contribute to,” Wu said. “The hope is that we have really good explanations not just of what neurons respond to, but generally the behavior of these models — what kind of circuits they compute and how certain neurons affect other neurons.”